Architecture

Seven components. Two agents do the work; the other five exist to make agent actions safe and auditable.

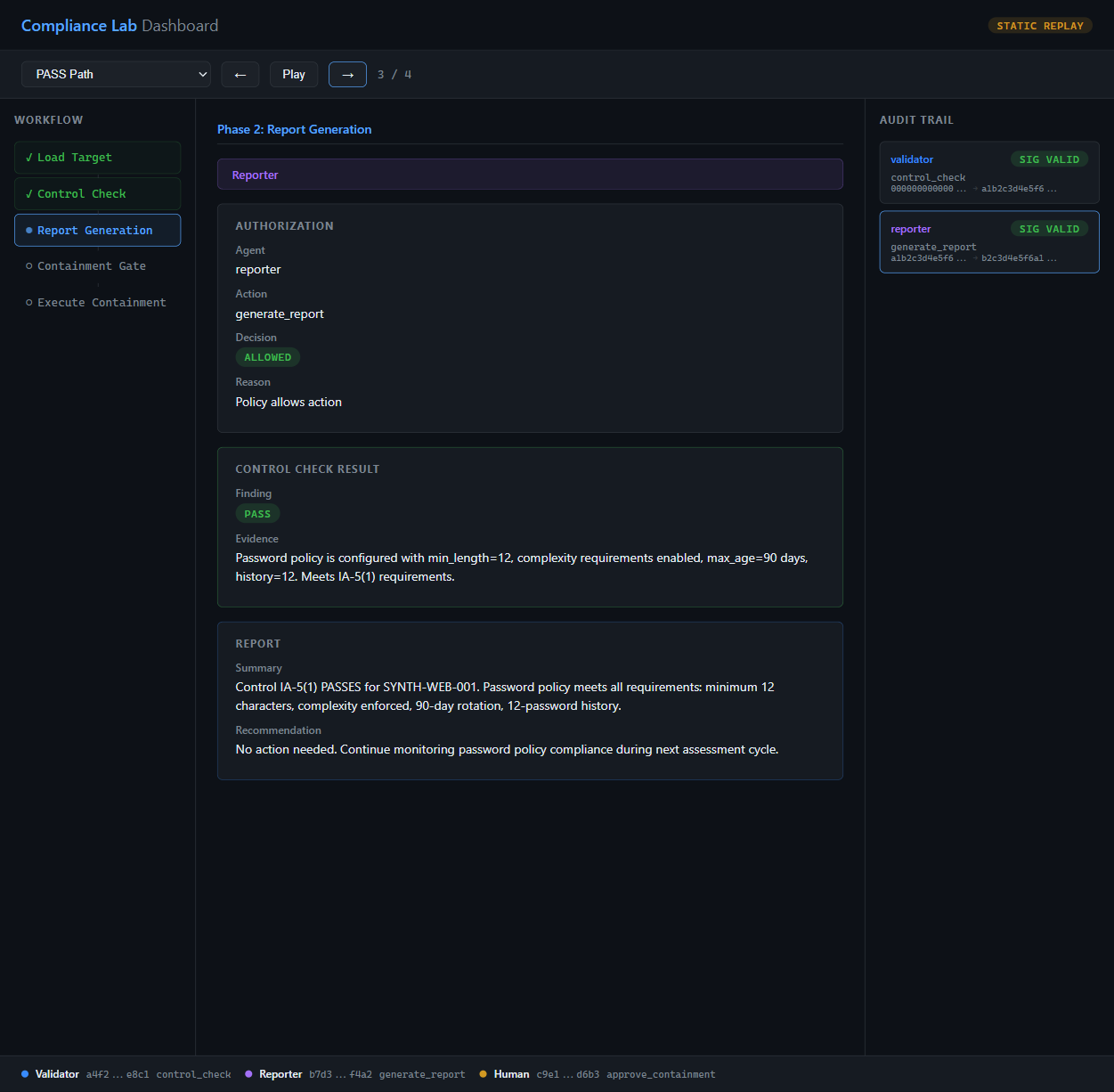

Validator Agent

Runs control checks against the synthetic target's configuration. Reads control text from the NIST corpus via PDP-gated retrieval.

Reporter Agent

Summarizes validator findings into a human-readable report. When a check fails, proposes a containment action.

Policy Decision Point

Every agent action passes through here. Agents are scoped to specific actions; a denied request never reaches its target.
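
A minimal sketch of how such a gate might look, assuming a static scope table. The names `AGENT_SCOPES`, `decide`, and `guarded_call`, and the action names, are hypothetical illustrations and not the project's actual API. The point it shows is that the target callable is only invoked after the decision allows it.

```python
from dataclasses import dataclass

# Hypothetical scope table: the actions each agent is allowed to request.
AGENT_SCOPES = {
    "validator": {"read_control", "read_target_config"},
    "reporter": {"read_findings", "propose_containment"},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def decide(agent: str, action: str) -> Decision:
    """Allow the action only if it is in the agent's scope."""
    scopes = AGENT_SCOPES.get(agent)
    if scopes is None:
        return Decision(False, f"unknown agent: {agent}")
    if action not in scopes:
        return Decision(False, f"{agent} is not scoped for {action}")
    return Decision(True, "in scope")


def guarded_call(agent: str, action: str, target, *args):
    """Invoke target only after an allow decision; a denial never reaches it."""
    decision = decide(agent, action)
    if not decision.allowed:
        raise PermissionError(decision.reason)
    return target(*args)
```

In this sketch a denied request raises `PermissionError` before `target` is called, so the denial can be logged without the target ever seeing the request.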

NIST 800-53 Corpus

Twenty paraphrased controls indexed in Qdrant via LlamaIndex. The Validator queries by control ID; retrieval is RAG-grounded, not hardcoded.

Synthetic Targets

Fabricated system configurations with deliberate pass/fail conditions. No real assets are used, which keeps the IP firewall fully intact.
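
As an illustration, a pair of synthetic targets and a single check could be sketched as follows. The target names, the `mfa_enabled` field, and `check_mfa` are assumptions made up for this example; the real targets and checks may look quite different.

```python
# Hypothetical synthetic targets: fabricated configs, one built to pass
# and one built to fail the same check.
SYNTHETIC_TARGETS = {
    "target-pass": {"mfa_enabled": True, "session_timeout_minutes": 15},
    "target-fail": {"mfa_enabled": False, "session_timeout_minutes": 15},
}


def check_mfa(config: dict) -> dict:
    """Pass only when the config explicitly enables MFA."""
    passed = config.get("mfa_enabled") is True
    return {"check": "mfa_enabled", "status": "pass" if passed else "fail"}


def run_checks(targets: dict) -> dict:
    """Run the check against every target and collect findings by name."""
    return {name: check_mfa(config) for name, config in targets.items()}
```

Because the outcome of each target is known in advance, a run over `SYNTHETIC_TARGETS` doubles as a regression test for the check itself.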

Audit Log

Hash-chained append-only log. Every entry is signed by the actor; tampering breaks the chain visibly.
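
The chaining idea can be sketched with SHA-256 from the standard library. This is an assumption-laden illustration: the entry fields, `GENESIS_HASH`, and the function names are hypothetical, and the per-entry actor signature is omitted here to keep the example dependency-free.

```python
import hashlib
import json

# Hypothetical starting value for the first entry's "prev" field.
GENESIS_HASH = "0" * 64


def entry_hash(prev_hash: str, actor: str, action: str) -> str:
    """Hash the entry contents together with the previous entry's hash."""
    payload = json.dumps(
        {"prev": prev_hash, "actor": actor, "action": action}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, actor: str, action: str) -> dict:
    """Append a new entry linked to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "prev": prev_hash,
        "actor": actor,
        "action": action,
        "hash": entry_hash(prev_hash, actor, action),
    }
    log.append(entry)
    return entry


def verify(log: list):
    """Return the index of the first broken entry, or None if the chain is intact."""
    prev_hash = GENESIS_HASH
    for index, entry in enumerate(log):
        expected = entry_hash(entry["prev"], entry["actor"], entry["action"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return index
        prev_hash = entry["hash"]
    return None
```

Editing an entry in place invalidates its own hash, and recomputing that hash breaks the link from the next entry, so `verify` reports a break either way.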

Human Approver

Containment actions require an Ed25519-signed human decision. Approve or deny; the decision itself is logged.